Digital Library [Search Result]

Enhancing Retrieval-Augmented Generation Through Zero-Shot Sentence-Level Passage Refinement with LLMs

Taeho Hwang, Soyeong Jeong, Sukmin Cho, Jong C. Park

http://doi.org/10.5626/JOK.2025.52.4.304

This study presents a novel method for improving Retrieval-Augmented Generation (RAG) that uses Large Language Models (LLMs) to remove irrelevant content from retrieved documents at the sentence level. The approach refines passages using LLMs alone, with no additional training or data, with the goal of improving performance on knowledge-intensive tasks. The proposed method was tested in an open-domain question answering (QA) setting, where it effectively removed unnecessary content and outperformed traditional RAG methods. Overall, the approach improves on conventional RAG techniques and can raise RAG accuracy in a zero-shot setting without additional training data.

Political Bias in Large Language Models and its Implications on Downstream Tasks

Jeong yeon Seo, Sukmin Cho, Jong C. Park

http://doi.org/10.5626/JOK.2025.52.1.18

This paper contains examples of political leaning bias that can be offensive.

As the performance of Large Language Models (LLMs) improves, direct interaction with users becomes possible, raising ethical issues. In this study, we design two experiments to explore the diverse spectrum of political stances that an LLM exhibits and how these stances affect downstream tasks. We first define the inherent political stances of the LLM as the baseline and compare results from three different inputs (jailbreak, political persona, and jailbreak persona). The results show that the political stances of the LLM changed the most under the jailbreak attack, while smaller changes were observed with the other two inputs. Moreover, an experiment involving downstream tasks demonstrated that the distribution of altered inherent political stances can affect the outcome of these tasks. These results suggest that the model generates responses that align more closely with its inherent stance than with the user’s intention to personalize responses. We conclude that the intrinsic political bias of the model and its judgments can be explicitly communicated to users.

Search

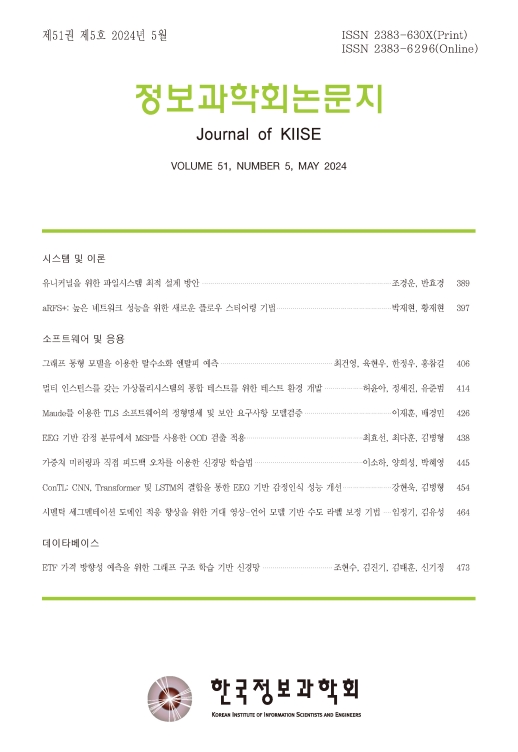

Journal of KIISE

- ISSN: 2383-630X (Print)

- ISSN: 2383-6296 (Electronic)

- KCI Accredited Journal
