
Korean Text Summarization using MASS with Copying and Coverage Mechanism and Length Embedding

Youngjun Jung, Changki Lee, Wooyoung Go, Hanjun Yoon

http://doi.org/10.5626/JOK.2022.49.1.25

Text summarization is the task of generating a summary that retains the important and essential information in a given document, and end-to-end abstractive summarization using sequence-to-sequence models is the main line of study. Recently, transfer learning, in which a model pre-trained on large-scale monolingual data is fine-tuned for a downstream task, has been actively studied in natural language processing. In this paper, we add a copying mechanism to the MASS model, pre-train it for Korean language generation, and then apply it to Korean text summarization. We additionally apply a coverage mechanism and length embedding to improve the summarization model. In our experiments, the Korean text summarization model that applies the copying and coverage mechanisms to MASS outperformed existing models, and the length of the summary could be adjusted through length embedding.
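To make the coverage mechanism concrete, here is a minimal sketch. It assumes the formulation commonly used with pointer-generator summarizers, in which a coverage vector accumulates the attention from earlier decoder steps and a penalty sums the element-wise minimum of the current attention and that coverage. The abstract does not say which formulation the authors used, and the function name `coverage_loss` and the plain-list representation of attention are hypothetical.

```python
def coverage_loss(attention_steps):
    """Illustrative coverage penalty (assumed formulation, not the paper's code).

    attention_steps: list of attention distributions, one per decoder step,
    each a list of weights over the source positions.
    Returns the sum over steps of sum_i min(attention[i], coverage[i]),
    where coverage is the running sum of attention from earlier steps.
    """
    if not attention_steps:
        return 0.0
    coverage = [0.0] * len(attention_steps[0])
    total = 0.0
    for attn in attention_steps:
        # Penalize attention mass placed on positions that are already covered.
        total += sum(min(a, c) for a, c in zip(attn, coverage))
        # Update coverage with the attention used at this step.
        coverage = [c + a for c, a in zip(coverage, attn)]
    return total


# Attending to the same source position twice is penalized...
repeated = coverage_loss([[1.0, 0.0], [1.0, 0.0]])   # 1.0
# ...while moving attention to a new position is not.
spread = coverage_loss([[1.0, 0.0], [0.0, 1.0]])     # 0.0
```

In training, a term of this kind is typically added to the main loss with a weighting factor, which discourages the decoder from repeatedly attending to the same source tokens.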

Resolution of Answer-Repetition Problems in a Generative Question-Answering Chat System

http://doi.org/10.5626/JOK.2018.45.9.925

A question-answering (QA) chat system is a chatbot that responds to simple factoid questions by retrieving information from knowledge bases. Recently, many chat systems based on sequence-to-sequence neural networks have been implemented and have shown new possibilities for generative models. However, generative chat systems suffer from word repetition: the same words are generated repeatedly within a response. A QA chat system has a similar problem: the same answer expressions frequently appear for a given question and are generated repeatedly. To resolve this answer-repetition problem, we propose a new sequence-to-sequence model that incorporates a coverage mechanism and an adaptive control of attention (ACA) mechanism in the decoder. In addition, we propose a repetition loss function that reflects the number of unique words in a response. In the experiments, the proposed model outperformed various baseline models on all metrics: accuracy, BLEU, ROUGE-1, ROUGE-2, ROUGE-L, and Distinct-1.
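Of the metrics listed, Distinct-1 is the one that directly measures repetition: it is usually defined as the number of unique unigrams divided by the total number of unigrams in the generated responses. The sketch below follows that common definition and assumes whitespace tokenization; the abstract does not describe the authors' tokenization or evaluation script, and the function name `distinct_n` is hypothetical.

```python
def distinct_n(responses, n=1):
    """Ratio of unique n-grams to total n-grams over a list of responses.

    Assumes whitespace tokenization; n=1 gives Distinct-1.
    Returns 0.0 when no n-grams can be formed.
    """
    ngrams = []
    for response in responses:
        tokens = response.split()
        # Collect every contiguous n-gram in this response.
        ngrams.extend(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    if not ngrams:
        return 0.0
    return len(set(ngrams)) / len(ngrams)


# A highly repetitive response scores low; a varied one scores high.
repetitive = distinct_n(["the the the the"])      # 0.25
varied = distinct_n(["seoul is the capital"])     # 1.0
```

A higher value indicates less repetition, so a model that keeps generating the same words or answer expressions is pushed toward a low score.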

Search

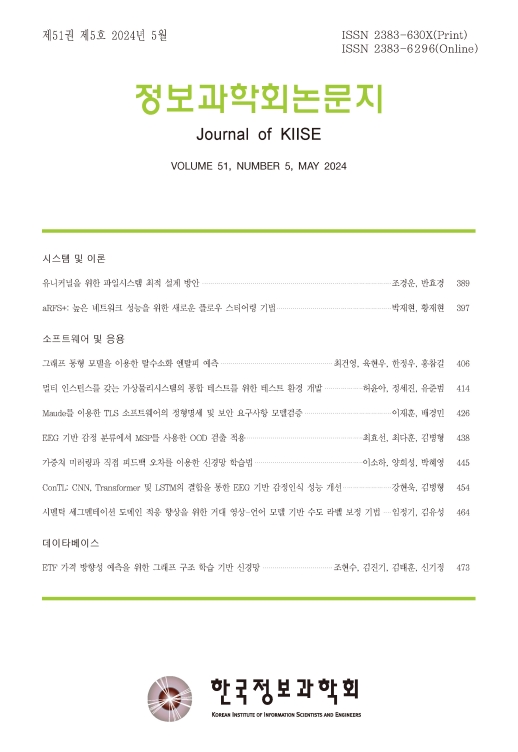

Journal of KIISE

- ISSN : 2383-630X(Print)

- ISSN : 2383-6296(Electronic)

- KCI Accredited Journal

Editorial Office

- Tel. +82-2-588-9240

- Fax. +82-2-521-1352

- E-mail. chwoo@kiise.or.kr